Key areas of research

“Methods for computer vision detect cancer cells or help blind people to navigate through town, chatbots answer customer questions 24 hours a day, and self-driving cars are set to radically change our transport systems. Our research strives for excellence through practical applicability.”

Areas of application

At the CAI, we focus on machine learning and deep learning methodology. In our experience, breakthroughs in one use case tend to translate well to different domains, current AI methodology being largely sector-independent. We apply our expertise in the following areas:

Health and medicine, Industry 4.0, robotics, predictive maintenance, automated quality control, document analysis, and other data science use cases in industries including manufacturing, finance and insurance, retail, transportation, digital farming, weather forecasting, earth observation and many more.

Autonomous Learning Systems

Topics:

- Reinforcement learning

- Multi-agent systems

- Embodied AI

In autonomous learning systems research, we investigate the design and development of intelligent systems that close a feedback loop between perception (processing incoming sensor data) and action (executing actions that in turn influence the environment being perceived), known as the perception-action loop. An important methodology in this context is (deep) reinforcement learning, which allows agents to learn through trial and error. In the future, this reward-based type of learning is expected to open up new areas of application, for example in industrial production or in neurotechnology, that lie beyond the learning from pairs of inputs and hand-engineered outputs that is traditional in most industries. Connecting such systems to hardware equipped with the required sensors and actuators creates additional training potential for the algorithms through physical interaction (embodiment, e.g. in a robotic device).
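The perception-action loop and reward-based learning described above can be made concrete with a minimal sketch. It uses tabular Q-learning, one standard reinforcement learning algorithm, on a hypothetical five-cell corridor in which the agent is rewarded only when it reaches the rightmost cell. The environment, the function names and all hyperparameters are assumptions chosen for illustration and do not come from any CAI project.

```python
import random

# Hypothetical toy environment: a corridor of 5 cells with a reward at the right end.
N_STATES = 5
ACTIONS = (-1, +1)  # move left, move right


def step(state, action):
    """Environment dynamics: apply the action, return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning: estimate action values purely from trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Perception: the agent observes `state`.
            # Action: explore with probability epsilon, otherwise act greedily.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])
            next_state, reward, done = step(state, ACTIONS[a])
            # Reward-based update towards the bootstrapped target.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Preferred move (-1 or +1) for every non-terminal state."""
    return [ACTIONS[max(range(len(ACTIONS)), key=lambda i: q[s][i])]
            for s in range(N_STATES - 1)]
```

Calling `greedy_policy(train())` returns the learned move for each cell. No labelled input-output pairs are involved: the agent only ever sees states and rewards.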

Project example

FarmAI – Artificial intelligence for the Farming Simulator

For the globally successful "Farming Simulator" video game series developed by GIANTS Software GmbH, a new, continually entertaining, easily extendable game mode has been made possible through artificial intelligence (AI). In this project, reinforcement learning algorithms are used to find suitable action strategies by simulating games.

Selected publication

Combining Reinforcement Learning with Supervised Deep Learning for Neural Active Scene Understanding

While in living beings, vision is an active process whereby image acquisition and classification are intertwined to gradually refine perception, much of today’s computer vision is built on the inferior paradigm of episodic classification of i.i.d. samples. Our aim is to enable improved scene understanding for robots while taking the sequential nature of seeing over time into account. We present a combined supervised and reinforcement learning multi-task approach to answer questions about different aspects of a scene, such as the relationship between objects, their quantity or their relative positions to the camera.

Computer Vision, Perception and Cognition

Topics:

- Pattern recognition

- Machine perception

- Neuromorphic engineering

The computer vision, perception and cognition area focuses on generating semantic understanding from high-dimensional input. This is achieved by learning essential patterns from data and then detecting them in new input, using machine learning methodology and, specifically, deep neural networks (pattern recognition). Input sources include images, videos and other multimedia signals, but also multi-dimensional data series from any technical or non-technical field. Methodologically, classification, semantic segmentation and object detection are used to analyse the input, while corresponding generative models (e.g. generative adversarial networks) are used to synthesise it. Biology-inspired ideas from neuroscience are used to further develop the methodology (neuromorphic engineering).

Project example

RealScore - Scanning of Real-World Sheet Music for a Digital Music Stand

We have built a sheet music scanning service that ensures high quality input. To increase market penetration, we plan to extend its use to smartphone images, used sheets and other sources. Project RealScore enhances the successful precursor project by making deep learning adapt to previously unseen data through unsupervised learning.

Selected publication

Due to the methodology's vast success on a wide range of machine perception tasks, deep learning with neural networks is applied by an increasing number of actors outside classic research environments. While this interest is fuelled by exciting success stories, practical work in deep learning on novel tasks without existing baselines remains challenging. This paper explores specific challenges that arise in the realm of real-world tasks, based on case studies from research and development in conjunction with industry, and extracts the lessons learned.

Trustworthy AI

Topics:

- Trustworthy machine learning

- Robust deep learning

- AI & society

In this strategic focus area, we investigate deep learning approaches that meet the special requirements of professional (e.g. industrial or medical) practice with regard to the trustworthiness of the methods used. On the one hand, this means achieving results that are robust to slightly varying input (e.g. due to creeping changes in the environment such as covariate shift or concept drift, or due to adversarial attacks) and that remain robust despite small training sets (“small data” or “learning from little”, e.g. through better transfer learning and the use of self-supervised and unsupervised learning). On the other hand, it is important to make both the learned models and the training process itself explainable (explainable AI, XAI), which fosters the trust of users and affected persons in the system (trustworthy AI). Meeting regulatory requirements (certifiability) and social requirements (e.g. avoiding algorithmic bias) for such systems is also a subject of research in this area.
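As an illustration of how creeping changes in the input can be noticed before they degrade a model, the sketch below flags covariate shift in a single numeric feature with a two-sample Kolmogorov-Smirnov test. The choice of test, the function names `ks_statistic` and `shift_detected`, and the 5% significance level are assumptions made for this example. It is one common monitoring technique, not a method attributed to the research described here.

```python
import bisect
import math


def ks_statistic(reference, live):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    ref, liv = sorted(reference), sorted(live)
    gap = 0.0
    # Both empirical CDFs are step functions, so the largest gap occurs at a sample point.
    for x in ref + liv:
        cdf_ref = bisect.bisect_right(ref, x) / len(ref)
        cdf_liv = bisect.bisect_right(liv, x) / len(liv)
        gap = max(gap, abs(cdf_ref - cdf_liv))
    return gap


def shift_detected(reference, live, alpha=0.05):
    """Flag a shift if the statistic exceeds the large-sample critical value.

    The critical value is an asymptotic approximation and is only reliable
    for reasonably large samples (an assumption of this sketch).
    """
    n, m = len(reference), len(live)
    critical = math.sqrt(-0.5 * math.log(alpha / 2)) * math.sqrt((n + m) / (n * m))
    return ks_statistic(reference, live) > critical
```

In practice, `reference` would hold feature values seen during training and `live` the values observed in operation. A detected shift is a prompt to inspect or retrain the model, not proof that its predictions are wrong.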

Project example

QualitAI - Quality control of industrial products via deep learning on images

QualitAI researches and develops a device for the automatic quality control of industrial products such as cardiac balloon catheters. This is facilitated through innovation in analysing camera images using deep learning, specifically in rendering the resulting model robust and interpretable.

Selected publication

Trace and Detect Adversarial Attacks on CNNs using Feature Response Maps

The existence of adversarial attacks on convolutional neural networks (CNN) raises doubts about the fitness of such models for serious applications. Such attacks can manipulate input images so that misclassification is evoked while the images continue to look normal to the human observer—they are therefore not easily detectable. In a different context, backpropagated activations of CNN hidden layers—“feature responses” to a given input—have been helpful in visualising for a human “debugger” what the CNN “looks at” while computing its output. In this paper, we propose a novel detection method for adversarial examples to prevent attacks.
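To make concrete how a small input manipulation can evoke a misclassification, the sketch below applies the fast gradient sign method (FGSM), a well-known attack, to a toy logistic-regression classifier. The weights, the input and the step size `epsilon` are hypothetical. The sketch illustrates an attack only; it is not the detection method proposed in the paper, and the abstract does not say which attacks were studied.

```python
import math


def predict(weights, bias, x):
    """Probability of the positive class under a logistic-regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(weights, bias, x, label, epsilon):
    """Fast gradient sign method: move every feature by epsilon in the
    direction that increases the cross-entropy loss for the true label."""
    p = predict(weights, bias, x)
    # For logistic regression, d(loss)/d(x_i) = (p - label) * w_i.
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]


# Hypothetical model and input, chosen only for illustration.
WEIGHTS, BIAS = [2.0, -1.0, 0.5], 0.0
x_clean = [0.2, -0.1, 0.1]
x_adv = fgsm(WEIGHTS, BIAS, x_clean, label=1, epsilon=0.2)
```

Here every feature changes by at most `epsilon`, yet the predicted probability for the true class drops from above 0.5 for `x_clean` to below 0.5 for `x_adv`.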

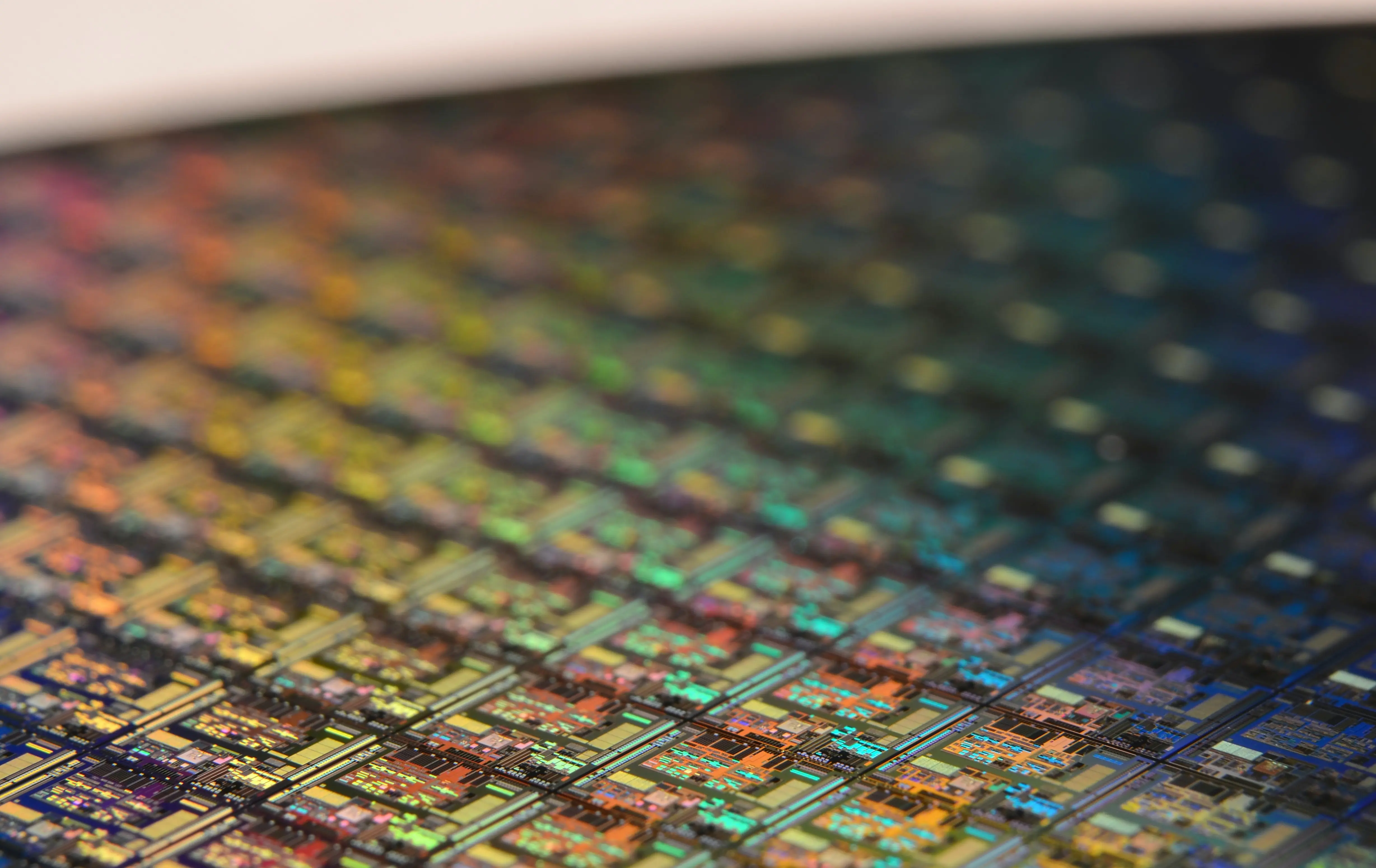

AI Engineering

Topics:

- MLOps

- Data-centric AI

- Continuous learning

This strategic focus area examines development methods and processes for implementing and deploying the methods covered in the other focus areas in real systems. On the one hand, this includes developing and transferring know-how and tools that support development (development environments, debugging, etc.), operation (e.g. deployment, life cycle management) and the interaction between the two in the context of machine learning systems (MLOps, GPU cluster computing, etc.). On the other hand, we seek new ways to develop such systems with a focus on optimising the available data (data-centric AI) and to provide machine learning support for collecting, processing and labelling the data. A further focus is on advancing approaches for systems that learn continuously instead of being trained only once and then used permanently (continuous learning). The findings from this focus area flow continuously into open source products and demonstrators.

Project example

Pilot study machine learning for injection molding processes

Researchers from the CAI and InES are jointly investigating, as part of a technical deep dive, the opportunities for bundling process knowledge about injection molding in neural networks and transferring it to new application scenarios. The groups of Prof. Stadelmann (Computer Vision, Perception & Cognition, ZHAW CAI) and Prof. Rosenthal (Realtime Platforms, ZHAW InES) recently teamed up with the Kistler Innovation Lab to explore risks and opportunities for improving automatic quality control and process monitoring in plastic injection molding using advanced machine learning. In particular, transfer learning / domain adaptation and continuous learning in neural networks are being evaluated for small data scenarios. The team secured funding for a three-month technical deep dive through the NTN Databooster from the Data Innovation Alliance and Innosuisse. The results will inform the design and implementation of future Kistler products in another joint project. The NTN Databooster supports data-driven innovation by helping companies find suitable research partners for their challenges, identify new solutions, assess feasibility and risks in technical depth and finally apply for appropriate funding for joint R&D projects. CAI staff members have been active in various capacities since the founding of the Databooster and its supporting organisation, the Data Innovation Alliance, and have successfully completed several R&D projects within this framework.

Selected publication

Design patterns for resource-constrained automated deep-learning methods

We present an extensive evaluation of a wide variety of promising design patterns for automated deep-learning (AutoDL) methods, organized according to the problem categories of the 2019 AutoDL challenges, which set the task of optimizing both model accuracy and search efficiency under tight time and computing constraints. We propose structured empirical evaluations as the most promising avenue to obtain design principles for deep-learning systems due to the absence of strong theoretical support. From these evaluations, we distill relevant patterns which give rise to neural network design recommendations. In particular, we establish (a) that very wide fully connected layers learn meaningful features faster; we illustrate (b) how the lack of pretraining in audio processing can be compensated by architecture search; we show (c) that in text processing deep-learning-based methods only pull ahead of traditional methods for short text lengths with less than a thousand characters under tight resource limitations; and lastly we present (d) evidence that in very data- and computing-constrained settings, hyperparameter tuning of more traditional machine-learning methods outperforms deep-learning systems.

Natural Language Processing

Topics:

- Dialogue systems

- Text analytics

- Speech processing

The focus area natural language processing investigates the machine understanding of human language in spoken form (spoken language processing, automatic speech recognition, speaker diarization) and written form (natural language processing, text analytics), as well as the use of corresponding machine learning methods such as transformers. This research is conducted in the context of dialogue systems and aims to enable natural language communication between humans and machines. Particular attention is paid to developing and providing adapted methods and models for dialects and rare languages for which only limited training data is available. Further research areas include text classification (e.g. sentiment analysis), chatbots and natural language generation.

Project example

By developing child-like avatars for the training of interrogators of children, this project manages to close significant knowledge gaps regarding the effectiveness of individual training elements and personal influencing variables. The findings and the training tool can be used for education and training purposes as well as for the recruitment of personnel. The project’s findings can be used as a basis to improve interrogation practices and help to meet the international demand for a child-friendly justice system.

Selected publication

Survey on evaluation methods for dialogue systems

In this paper, we survey methods and concepts developed for the evaluation of dialogue systems. Evaluation, in and of itself, is a crucial part of the development process. Often, dialogue systems are assessed by means of human evaluation and questionnaires. However, these methods tend to be very expensive and time-consuming, which is why there have been intense efforts to find methods that reduce the involvement of human labour.

Publications

- Schmid, Lars; von Däniken, Pius; Giedemann, Patrick; Tuggener, Don; Bühler, Judith; Kamenowski, Maria; Girschik, Katja; Baier, Dirk; Cieliebak, Mark, 2025. HICC: a dataset for German hate speech in conversational context. In: Proceedings of the 21st Conference on Natural Language Processing (KONVENS 2025). 21st Conference on Natural Language Processing (KONVENS 2025), Hannover, Germany, 9-12 September 2025. Association for Computational Linguistics. pp. 168-176. Available from: https://doi.org/10.21256/zhaw-35860

- Stucki, Samuel; Deriu, Jan; Cieliebak, Mark, 2025. Voice adaptation for Swiss German. In: Proceedings Interspeech 2025. Interspeech 2025, Rotterdam, The Netherlands, 17-21 August 2025. International Speech Communication Association. pp. 4143-4147. Available from: https://doi.org/10.21437/interspeech.2025-432

- von Däniken, Pius; Deriu, Jan Milan; Cieliebak, Mark, 2025. A measure of the system dependence of automated metrics. In: Che, Wanxiang; Nabende, Joyce; Shutova, Ekaterina; Pilehvar, Mohammad Taher, eds., Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers). 63rd Annual Meeting of the Association for Computational Linguistics (ACL), Vienna, Austria, 27 July - 1 August 2025. Association for Computational Linguistics. pp. 87-99. Available from: https://doi.org/10.18653/v1/2025.acl-short.8

- Cieliebak, Mark; Gerber, Jonathan; Hürlimann, Manuela, 2025. SwissCoco2025: the Swiss Corpora Collection 2025. In: Gerber, Jonathan; Cieliebak, Mark; Tuggener, Don; Hürlimann, Manuela, eds., Proceedings of the 10th edition of the Swiss Text Analytics Conference. 10th Swiss Text Analytics Conference – SwissText 2025, Winterthur, Switzerland, 13-15 May 2025. Association for Computational Linguistics. pp. 133-148. Available from: https://doi.org/10.21256/zhaw-36110

- Gerber, Jonathan; Cieliebak, Mark; Tuggener, Don; Hürlimann, Manuela, eds., 2025. Proceedings of the 10th edition of the Swiss Text Analytics Conference. 10th Swiss Text Analytics Conference – SwissText 2025, Winterthur, Switzerland, 13-15 May 2025. Association for Computational Linguistics. ISBN 978-1-950737-19-2. Available from: https://aclanthology.org/2025.swisstext-1.0/